“Set your channel gain to 11-12 o’clock.”

Telling people to leave their trim/gain knobs at one fixed position (or a narrow range) is bad advice, because source levels vary from track to track. It only worked for him because he was using test signals of known level.

People should be trying to stay out of the top two meter LEDs on all this gear. The second-to-top LED is really only for accidents, since once you’re in it you have no way of knowing how far from clip you are. I realize that if the current second-to-top LED on the GO really covers a whole 18dB, that’s asking a lot. If you’re tempted to go into the meter zero on the GO, back the level off frequently to check how deep into it you are. Obviously it would be less tempting to go into the second-to-top LED if InMusic moved the GO’s meter zero to a higher value and had that LED cover a smaller range (say, somewhere from 3 to 9dB… take your pick), like they do on Numark digital mixers with similar meters. They’d then have to alter the scaling of the LEDs below it, but I don’t think anyone will complain about the lower LEDs’ markings below zero no longer being accurate.

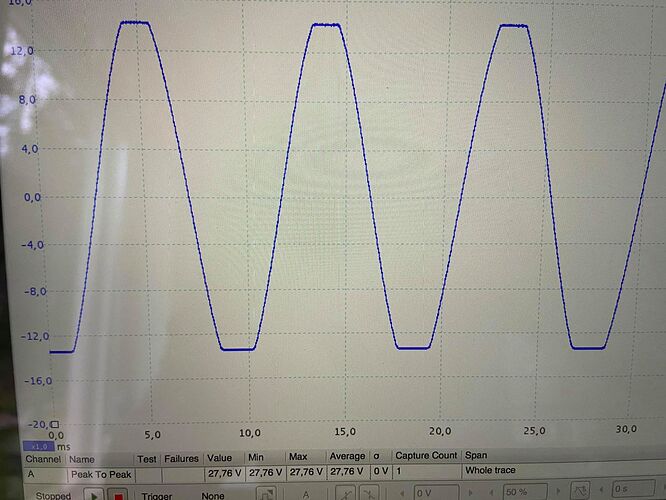

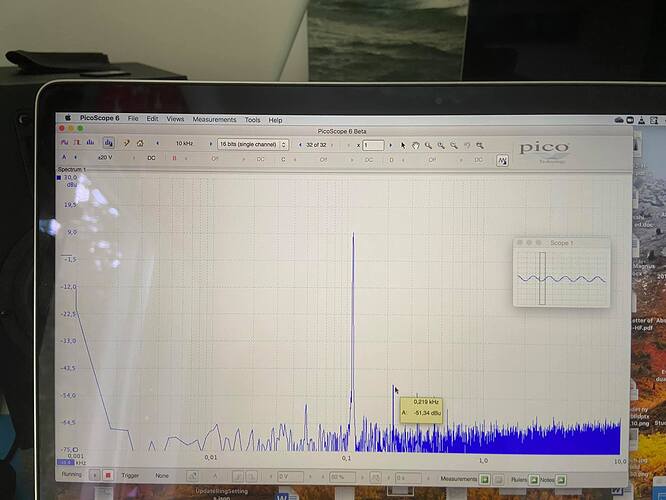

“if you increase the channel gain past 1.30 o’clock (see settings in picture below and also the harmonic distortion of the 110 Hz signal in the FFT plot) there WILL be significant harmonic distortion even if the blue VU LED is not lit and there is no hard clipping and I suspect that this is what the Germans did. But WHY on earth would you do this???”

What’s strange there is not what the Germans did, but that the GO is doing that at all. Is there a defeatable compressor/limiter turned on in the GO? That should definitely be off when running tests. Did he have the tone controls’ isolator mode on? Tell him to switch it to EQ and run the tests again. Isolators are summed crossover-style filters and add a little group-delay (phase) distortion. The X1800 has a nifty bypass for the iso (at least it did on the last firmware I checked) when the tone controls for a channel are centered, but I don’t know that the GO has that. The X1800 also has its top meter LEDs at -1dBFS.

If it’s not the iso he’s seeing either, the other explanations are something like inter-sample clipping during oversampling… or the gain structure somewhere in the virtual signal path (it’s DSP stuff, after all) being even worse than anticipated… or a design screw-up in the GO’s analog output stages… or even an issue on his own ADC stage. Did he make sure he was not clipping his interface’s inputs? Some guy on YouTube did a measurement comparison of a Pioneer DJM and a Xone, was clearly clipping his interface inputs, and claimed one of the pieces had a problem with its levels. And that was done for one of the major online DJ rags!
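To show what I mean by inter-sample clipping, here is a minimal Python sketch. It is not a measurement of the GO. The 48kHz rate, the tone at a quarter of the sample rate and the 45-degree phase are all assumptions picked to make the effect obvious, and evaluating the sine on a finer grid just stands in for what an oversampling filter or DAC reconstruction would do.

```python
import math

FS = 48_000            # assumed sample rate, for illustration only
F0 = FS / 4            # test tone at a quarter of the sample rate
PHASE = math.pi / 4    # 45 degrees, so the samples straddle every crest
OVERSAMPLE = 16        # finer grid standing in for reconstruction/oversampling
N = 64                 # number of base-rate samples to inspect


def tone(t, amplitude):
    """Value of the continuous test sine at time t (seconds)."""
    return amplitude * math.sin(2 * math.pi * F0 * t + PHASE)


def to_db(x):
    """Linear amplitude (1.0 = full scale) to dBFS."""
    return 20 * math.log10(x)


# Scale the tone so the stored samples peak at exactly full scale.
raw_peak = max(abs(tone(n / FS, 1.0)) for n in range(N))
amplitude = 1.0 / raw_peak

# Peak as a sample-peak meter would see it.
sample_peak = max(abs(tone(n / FS, amplitude)) for n in range(N))

# Peak of the same waveform between the samples.
inter_sample_peak = max(
    abs(tone(n / (FS * OVERSAMPLE), amplitude)) for n in range(N * OVERSAMPLE)
)

sample_peak_db = to_db(sample_peak)
inter_sample_peak_db = to_db(inter_sample_peak)

print(f"sample peak:       {sample_peak_db:+.2f} dBFS")
print(f"inter-sample peak: {inter_sample_peak_db:+.2f} dBFS")
```

The samples never exceed full scale, yet the waveform between them peaks roughly 3dB higher, so any stage that reconstructs or oversamples it needs that much headroom or it clips with no sample ever reading "over".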

He also didn’t say anything about whether or not there’s ultrasonic garbage past the roll off on the GO. For that matter, he doesn’t appear to have measured the frequency response at all.

I’m not sure what “looks fine” means. A usual IMD test on an analog piece of gear sends the raw test signal to the gear and then analyzes the output from said gear in comparison. Pioneer CDJs from the mk3 on seem to output exactly the same amount of IMD as is present in the original test signal at zero pitch when using the SPDIF. The Prime standalone players’ digital audio processing adds 100X the intermodulation distortion of the original signal in RMAA. The comparison to the original signal’s measurement is what matters: how much more IMD is there compared to the original?
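The comparison itself is simple arithmetic. A rough sketch follows. The function name and the two percentages are made up for illustration (they are not anyone’s actual RMAA results), and it assumes the IMD figures are amplitude-ratio percentages, which is why it uses 20·log10.

```python
import math


def imd_increase(source_pct, output_pct):
    """Return (ratio, dB) of output IMD relative to the source signal's IMD.

    Both arguments are IMD figures in percent, assumed to be
    amplitude ratios (hence 20*log10 rather than 10*log10).
    """
    ratio = output_pct / source_pct
    return ratio, 20 * math.log10(ratio)


# Hypothetical numbers: a test file measuring 0.002% on its own,
# and 0.2% after passing through the device under test.
ratio, increase_db = imd_increase(0.002, 0.2)
print(f"{ratio:.0f}x the source IMD, about {increase_db:.0f} dB worse")
```

A 100X increase works out to about 40dB, while a player that passes the signal untouched would show a ratio of 1, i.e. 0dB added.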

A couple of single tones also don’t tell you how low the nonlinear distortion harmonics are. Hint: they’re nonlinear because their ‘multiple’ changes as the fundamental changes. They expand or squeeze as the sweep progresses. You have to do a complete sweep and look at the real-time FFT. This can be recorded in a video. His single pic does suggest the GO’s overall harmonic distortion from audio processing may be lower than the last time I tested the standalone players, but it doesn’t fully demonstrate it.
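One mechanism in digital processing that gives you products whose ‘multiple’ moves during a sweep is aliasing: a harmonic that lands above half the sample rate folds back down. That is an assumption about a possible cause, not something his plots establish. A small sketch, with a hypothetical 7th-order product and an assumed 48kHz processing rate:

```python
def folded_frequency(freq_hz, sample_rate_hz):
    """Frequency at which a component appears after aliasing at sample_rate_hz."""
    f = freq_hz % sample_rate_hz
    return sample_rate_hz - f if f > sample_rate_hz / 2 else f


SAMPLE_RATE = 48_000   # assumed processing rate
HARMONIC = 7           # hypothetical distortion order, for illustration

# Step the fundamental upward, as a sweep would, and see where the product lands.
for fundamental in (2_000, 4_000, 5_000, 6_000):
    product = folded_frequency(HARMONIC * fundamental, SAMPLE_RATE)
    print(f"fundamental {fundamental} Hz -> product at {product} Hz "
          f"({product / fundamental:.1f}x the fundamental)")
```

At 2kHz the product sits at a clean 7x, but by 5kHz it has folded to 13kHz, only 2.6x, and it keeps sliding as the fundamental rises. A couple of fixed tones can easily miss that; a full sweep watched on a real-time FFT won’t.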

If the GO’s IMD and nonlinear distortion harmonics are all lower than the standalone players’, it could simply be the result of the GO doing all its processing at 44.1kHz, 48kHz, or some near multiple, instead of at 24/96 or some huge multiple of 96kHz as the standalone players seem to, and so not having to bump it back down for the SPDIFs. Since the GO seems to only support up to 48kHz (right?) and has no SPDIF out, that would be one explanation if that’s the case. It would also mean InMusic should easily be able to let us either change the rate the standalone players are using or let the layer automatically change its rate to match the file, since the GO would be proof they can run with less SRC mucking things up. So that would definitely be a very good sign for all Prime users… sort of a light on the horizon.
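To put numbers on why the internal rate matters for SRC, here is a quick sketch of the resampling ratios involved. The internal rates are only my guesses from above, not anything InMusic has confirmed, and `src_ratio` is just a helper name I made up.

```python
from fractions import Fraction


def src_ratio(source_rate_hz, internal_rate_hz):
    """Exact resampling ratio needed to bring a file up to the internal rate."""
    return Fraction(internal_rate_hz, source_rate_hz)


FILE_RATES = (44_100, 48_000)        # common file sample rates
INTERNAL_RATES = (48_000, 96_000)    # assumed candidate processing rates

for source in FILE_RATES:
    for internal in INTERNAL_RATES:
        ratio = src_ratio(source, internal)
        kind = "integer" if ratio.denominator == 1 else "fractional"
        print(f"{source} Hz file -> {internal} Hz internal: ratio {ratio} ({kind})")
```

A 48kHz file going to 96kHz is a clean 2:1, but a 44.1kHz file needs 320/147, and it needs another conversion on the way back down. Processing at the file’s own rate would skip both steps.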